
A recent Business Insider article by Ed Zitron, “The Algorithmic Apocalypse,” predicts widespread societal dystopia as a result of AI and automation. Mr. Zitron raises some excellent points about the potential adverse effects of automation, and we’re right to be concerned about some of them (see the second half of this post).

Unfortunately, Mr. Zitron’s article is more a collection of cherry-picked anecdotes than a balanced, well-thought-out piece. It overlooks many of the positive historical effects of the technological advances that have enabled greater automation and efficiency.

Examples include drastically reduced energy consumption relative to GDP in the U.S. since 1975, huge improvements in the quality and capabilities of goods like cars and electronics, and the country’s ability to feed itself with far fewer resources every decade.

The reality is that with any automation there are winners and losers among both providers and consumers. Sorting through the net effects can be complicated, and rife with ethical and moral issues. For example, Mr. Zitron links pejoratively to this study about AI replacing doctors, but he doesn’t mention the study’s actual conclusion: Patients preferred the AI! In fact, the study’s authors write, “In a system this poor, AI could actually be an improvement.”

It’s not a stretch to say that the non-wealthy already cannot get medical care at a remotely reasonable price. If AI can help people navigate a wildly inefficient, insanely expensive, and borderline corrupt U.S. medical system, is it ethical to withhold this technology from them?

Has Amazon (mentioned in the article), in all of its relentless efficiency-seeking, put smaller sellers out of business? Almost surely. But it also made shopping really, really convenient, and enabled us to get a very wide variety of niche products much more quickly and cheaply than we could before.

Furthermore, Amazon has allowed some niche sellers to expand their audiences enormously via its distribution. It's hard to net out the pros and cons.

By the way, if you’re anti-Amazon, there’s a very simple way to express that: Boycott it. Judging by the stats, this is not something most Americans do: Amazon has 230 million U.S. users, or 89% of the number of U.S. adults. Don’t like Google (also mentioned in Mr. Zitron’s article)? Don’t use Google Maps or its search engine.

None of this is to say we shouldn’t be concerned about the disruptive effects of automation and AI on ordinary people. In fact, at our company (Austin AI), we think about it every day.

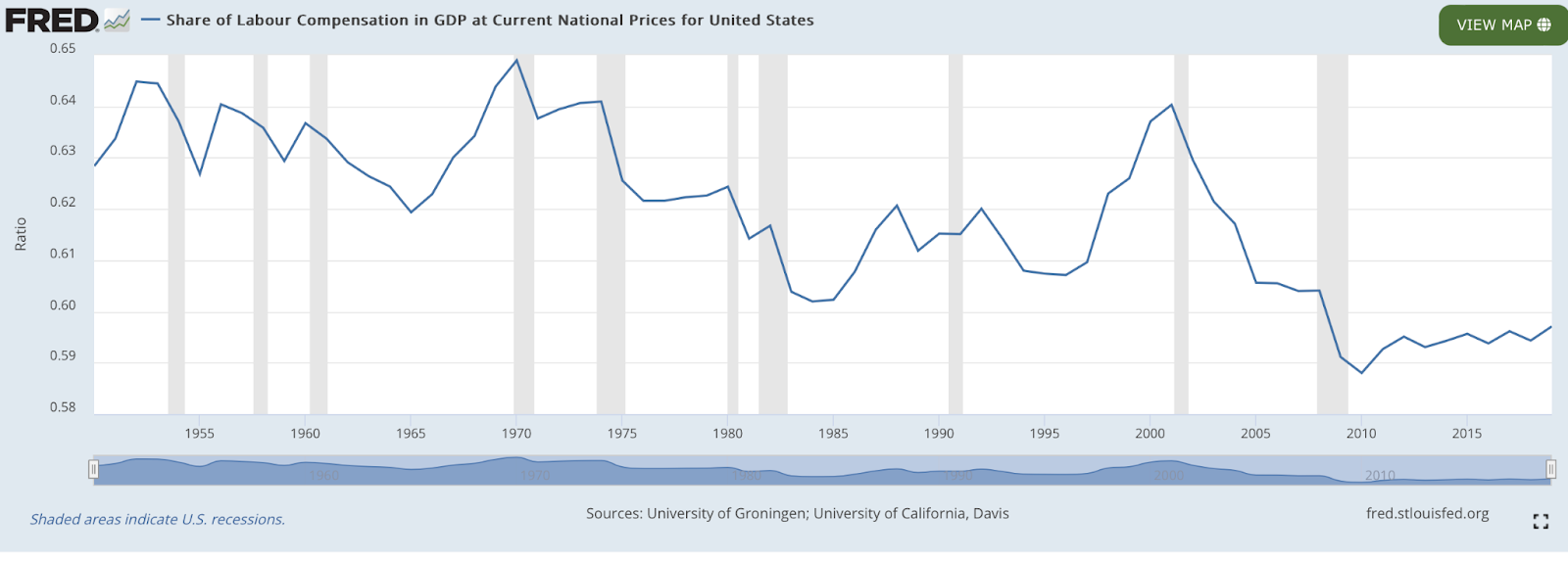

Among many other things, we need to monitor the returns to labor versus capital: That is, the share of income captured by workers versus corporate entities or financial capital. Labor’s share, recently at 60%, is indeed at generational lows for many countries, including the U.S.

But it hasn’t changed that much in absolute terms over the past 100 years, and, curiously, it has increased since 2010.

There remains considerable debate about why this is the case. But if technology ever made human labor insufficiently rewarding, massive policy debate and change would obviously be in order (in tax, antitrust, welfare, trade unions, labor law, etc.).

The Decline of Human Judgment

So much for my quibbles. Let’s take a closer look at what I believe is the author’s strongest point: The idea that human judgment is becoming scarce.

This idea certainly resonates with me, though I don’t worry about it much in the realm of business. I worry about it most in the domains of social interaction, journalism and the media, the social sciences, music, and art. In these areas, more organic, natural, exploratory, skill-based, and, frankly, honest ways of proceeding seem to be giving way to whatever shortcuts are suggested by some algorithm clearly designed to maximize revenue.

Why is this happening? Because, as the article correctly says, too many actors are trying to “game the system.” The result is “an ouroboros of mediocre, unreliable information.” Incidentally, this kind of algorithmic gamesmanship also stems from financial or legal incentives that are structurally misaligned within an industry, but that’s a different story.

However, I take issue with the article’s claim that the tech industry (and, by association, presumably the AI and automation technologies it developed) is completely to blame. Like any other commercial industry, the tech industry will follow the money by responding to the demands of its customer base.

Yes, the tech industry has succeeded in finding exponentially increasing new efficiencies in automating and delivering information. But there’s a much larger and (mostly) separate issue we need to grapple with: People remain rabid consumers of algorithms designed to spoon-feed their lives to them.

Do Computers Really Control Our Lives?

Mr. Zitron’s claim that “computers already control your life” somewhat overstates the case.

Yes, computers and algorithms are exerting quite a bit of influence. But computers, companies, or, for that matter, other people are likely to exert whatever degree of control over us that we allow them.

At least until we have artificial general intelligence, or AGI, computers do what their human programmers instruct them to do, and consumers must realize that their preferences (and ultimately their purchases) play a pivotal role in the ecosystem.

What Can Consumers Do?

So what can consumers do? We have a simple option to influence these dynamics: Demand higher quality.

Demand less biased, longer-form, more accurate, and more serious sources of news and information.

Seek social interactions which are perhaps fewer in quantity but deeper and richer in quality.

Let a company know when its automated customer service bot sucks, and switch to a different provider. Even if it’s more expensive.

Buy an actual book instead of just Googling the answer to something.

Take up a non-electronic hobby.

Watch instructional videos about a topic instead of 15-second clips.

Spend a little more to buy higher-quality, longer-lasting products.

What Should Tech Industry People Do?

And as for technology providers (of which I am one!), we should constantly bear in mind that while automation saves money, it may or may not come at the expense of quality. To the extent that it does, we need to recognize that automation has made the offering less functional and less competitive. That’s likely to matter to both the consumer and the company.

To the extent it doesn’t, we should acknowledge that capital saved by automation doesn’t necessarily need to be paid out as dividends. Rather, it can be reinvested in R&D, quality improvements, employee retention, or new ventures or products.

To anyone skeptical that a business would do so, note that U.S. R&D spending as a percentage of GDP has never been higher. And since the late 1970s, private industry has been the main driver of national investment in R&D.

Still, there will always be a risk of well-intended automations causing unintended consequences and harm, as in the implosion of Knight Capital (mentioned in the article). Sometimes, software bugs, models unable to handle edge cases, or poor forecasting can cause the robots to take action that a human would deem obviously unacceptable.
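One common-sense safeguard against runaway automation is a hard stop that no model output can override. The Python sketch below is a hypothetical, generic circuit breaker; the `CircuitBreaker` class, the `run_automation` helper, and their limits are illustrative assumptions, not a description of how Knight Capital’s systems worked or what they lacked. It halts an automated process once losses or the number of actions exceed preset limits, and only a human can re-arm it.

```python
class CircuitBreaker:
    """Hard stop for an automated process (hypothetical sketch).

    Trips once gross losses exceed max_loss or the number of actions reaches
    max_actions, and stays tripped until a human calls reset().
    """

    def __init__(self, max_loss: float, max_actions: int) -> None:
        if max_loss <= 0 or max_actions <= 0:
            raise ValueError("limits must be positive")
        self.max_loss = max_loss
        self.max_actions = max_actions
        self.reset()

    def reset(self) -> None:
        """Re-arm the breaker; intended to be a deliberate human decision."""
        self.gross_loss = 0.0
        self.actions = 0
        self.tripped = False

    def allow(self) -> bool:
        """Return True if the automation may take its next action."""
        return not self.tripped

    def record(self, pnl: float) -> None:
        """Record the profit (+) or loss (-) of one completed action."""
        if self.tripped:
            raise RuntimeError("breaker is tripped; no further actions allowed")
        self.actions += 1
        if pnl < 0:
            self.gross_loss += -pnl  # gains are not netted against losses
        if self.gross_loss > self.max_loss or self.actions >= self.max_actions:
            self.tripped = True


def run_automation(results: list[float], breaker: CircuitBreaker) -> int:
    """Feed simulated per-action results through the breaker; return how many ran."""
    executed = 0
    for pnl in results:
        if not breaker.allow():
            break
        breaker.record(pnl)
        executed += 1
    return executed
```

With a loss limit of 100, a stream of results like `[-40, 10, -50, -30, -20]` stops after the fourth action, once gross losses reach 120. Counting gross rather than net losses is a deliberately conservative choice; a real system would tune such limits to its domain.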

Unfortunately, the media conversation is skewed toward reporting extreme situations (hypothetical or actual) and away from common-sense discussion about how to avoid them.

And part of that is the algorithm at work, too. If it bleeds, it leads, after all. That tried-and-true journalistic maxim from generations ago is deeply embedded in our online news platforms and social media.

But from our experience, here are a few things that can move the needle in a more positive direction:

- Quality Engineering. Engineers, software and otherwise, understand how important this is. Bugs and bad code can torpedo a system very quickly. Good code doesn’t crash, is well organized and human-interpretable, and is robust against edge cases.

Testing is paramount. Non-technical people sometimes view coding as a language: Either you speak it or you don’t. In reality, as with any other skill, there is a very wide range of coding abilities and styles. Good software development principles go a long way toward the ultimate effectiveness and robustness of a model.
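To illustrate what testing against edge cases can look like in practice, here is a minimal Python sketch. The `moving_average` helper is hypothetical; the `test_` functions follow pytest’s naming convention but are plain functions, so they also run on their own. Note that most of the tests target awkward inputs (empty data, a window larger than the data, an invalid window) rather than the happy path.

```python
def moving_average(values: list[float], window: int) -> list[float]:
    """Trailing moving average (hypothetical helper used to illustrate testing).

    Returns an empty list when there are fewer points than the window.
    """
    if window <= 0:
        raise ValueError("window must be a positive integer")
    if len(values) < window:
        return []
    running = sum(values[:window])
    averages = [running / window]
    for i in range(window, len(values)):
        # Slide the window: add the newest value, drop the oldest one.
        running += values[i] - values[i - window]
        averages.append(running / window)
    return averages


def test_typical_case():
    assert moving_average([1, 2, 3, 4], 2) == [1.5, 2.5, 3.5]


def test_window_larger_than_data():
    assert moving_average([1, 2], 5) == []


def test_empty_input():
    assert moving_average([], 3) == []


def test_invalid_window_is_rejected():
    try:
        moving_average([1, 2, 3], 0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for window=0")
```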

- Parsimony. Don’t over-engineer or over-complicate a model or its inputs. If your neural network says something drastically different from a straightforward regression analysis, maybe it has picked up on non-linearities. Or maybe it’s just overfit and a bad forecaster.

Google’s #1 rule of machine learning is to start with heuristics instead of machine learning! Complexity can make a model better… but it can also create a “black box” that confounds interpretation and makes it hard to understand how the model will behave.
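In the spirit of starting with heuristics, one simple discipline is to make any sophisticated model beat a naive baseline before trusting it. The Python sketch below assumes a time-series forecasting task and uses a “predict the previous value” heuristic as the baseline; the function names and the 5% improvement threshold are illustrative assumptions, not a standard.

```python
from statistics import mean


def naive_forecast(series: list[float]) -> list[float]:
    """Heuristic baseline: predict each value to equal the one before it."""
    return series[:-1]  # predictions for series[1:]


def mean_absolute_error(actual: list[float], predicted: list[float]) -> float:
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def complex_model_earns_its_keep(series: list[float],
                                 model_predictions: list[float],
                                 min_improvement: float = 0.05) -> bool:
    """True only if the model's error on series[1:] is at least
    `min_improvement` (relative) below the naive baseline's error."""
    actual = series[1:]
    baseline_error = mean_absolute_error(actual, naive_forecast(series))
    model_error = mean_absolute_error(actual, model_predictions)
    return model_error <= baseline_error * (1 - min_improvement)
```

Ideally this comparison runs on data the model was not trained on; if a neural network can’t clear even this bar there, the added complexity probably isn’t buying much.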

- Focus. Focus on the ultimate human goal at hand. This is related to the previous point but is higher-level. Don’t do things for purely technical reasons unless there’s a good reason to. Prioritize technical decisions based on how likely they are to make the model more effective for the humans who rely on it.

- Proper Use of Model Stats. Know a model’s accuracy and consider what an acceptable rate is for the application at hand. A model with a 55% accuracy rate is acceptable in the stock market… and woefully inadequate in airline safety.

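Also, understand biases in modeling: If you’ve run 1,000 models to find one that is significant at the 99% level, it may not be as strong as you think. Even if none of them had any real predictive power, you would expect about 10 to clear that bar by chance alone.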
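The arithmetic behind that warning can be sketched in a few lines of Python. It assumes the models are independent tests and that none has real predictive power, which is a simplification. It also shows the Bonferroni correction, one standard (if conservative) way to tighten the per-model threshold when many models are tried.

```python
def prob_at_least_one_false_positive(num_models: int, alpha: float = 0.01) -> float:
    """Chance that at least one of `num_models` independent tests on pure noise
    clears the significance threshold `alpha` (the family-wise error rate)."""
    return 1 - (1 - alpha) ** num_models


def expected_false_positives(num_models: int, alpha: float = 0.01) -> float:
    """Average number of noise-only models expected to look 'significant'."""
    return num_models * alpha


def bonferroni_alpha(num_models: int, alpha: float = 0.01) -> float:
    """Per-model threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / num_models
```

With 1,000 independent tries at the 1% level, the chance that at least one looks significant is above 99.99%; dividing the threshold by 1,000 brings it back under 1%. Real models built from overlapping features are correlated, so the exact figures differ, but trying many models still inflates the odds of a spurious hit.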

- Anticipation. Developers and the executives who supervise them should constantly ask themselves, “What am I missing?”

Don’t be overconfident about anything; instead, constantly war-game what can go wrong, including downstream and second-order effects.

This could be more art than science, but we can start with the technical side. What happens when a user enters the wrong information, or when one part of a multi-part system fails? How do the effects cascade?
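Starting with the technical side, the Python sketch below shows two habits: rejecting bad user input at the boundary, and keeping the failure of one component (here, a hypothetical pricing model) from cascading through the rest of the system. `parse_quantity`, `quote_order`, the quantity limit, and the fallback-price policy are all illustrative assumptions.

```python
from typing import Callable


def parse_quantity(raw: object, max_qty: int = 10_000) -> int:
    """Validate user input at the boundary instead of letting bad data flow downstream."""
    try:
        qty = int(raw)
    except (TypeError, ValueError):
        raise ValueError(f"quantity must be a whole number, got {raw!r}") from None
    if not 1 <= qty <= max_qty:
        raise ValueError(f"quantity {qty} is outside 1..{max_qty}")
    return qty


def quote_order(raw_qty: object, price_model: Callable[[int], float],
                fallback_unit_price: float) -> dict:
    """Quote an order without letting a failing component cascade.

    If the pricing model raises or returns an implausible price, fall back to a
    conservative price and flag the quote for human review.
    """
    qty = parse_quantity(raw_qty)  # bad input is rejected here, loudly
    try:
        unit_price = price_model(qty)
        if not unit_price > 0:  # also rejects NaN
            raise ValueError(f"implausible price {unit_price!r}")
        needs_review = False
    except Exception:  # a real system would also log the error
        unit_price = fallback_unit_price
        needs_review = True
    return {
        "qty": qty,
        "unit_price": unit_price,
        "total": qty * unit_price,
        "needs_review": needs_review,
    }
```

Flagging the fallback quote for human review, rather than silently substituting a number, keeps a person in the loop for exactly the cases the model couldn’t handle.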

- Security and Boxing. Who has access to what? Unauthorized users should be boxed OUT of any system for obvious reasons. What’s less obvious is that AI systems should be boxed IN, with access only to the resources they need.

- Incentives and Alignment. See the previous two bullets about long-termism. In addition, researchers have rightly attempted to “align” modern models like LLMs to better meet human objectives. Unfortunately, the topic of alignment has already become political.

- R&D. Focus on how new technologies will be used in the real world rather than engaging in a pure arms race designed solely to increase model power and capability.

- Common sense: Use it.
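The “boxed IN” idea from the Security and Boxing point can be made concrete with a deny-by-default allowlist. In this hypothetical Python sketch, an AI agent can reach only the tools it was explicitly granted; `BoxedToolbox`, `ToolNotPermitted`, and any tool names are assumptions for illustration, not any particular agent framework’s API.

```python
from typing import Any, Callable


class ToolNotPermitted(PermissionError):
    """Raised when an agent requests a tool outside its box."""


class BoxedToolbox:
    """Deny-by-default wrapper: an agent can reach only allowlisted tools."""

    def __init__(self, registry: dict[str, Callable[..., Any]], allowed: set[str]) -> None:
        unknown = set(allowed) - set(registry)
        if unknown:
            raise ValueError(f"allowlist names unknown tools: {sorted(unknown)}")
        # Keep references to permitted tools only; the rest are unreachable.
        self._tools = {name: registry[name] for name in allowed}

    def call(self, name: str, *args: Any, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise ToolNotPermitted(f"tool {name!r} is not in this agent's allowlist")
        return self._tools[name](*args, **kwargs)
```

In a real deployment, the same principle extends to credentials, file systems, and network access; an application-level allowlist like this is only one layer.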

In sum, despite exponential advances in automation, machine learning and AI, society needn’t collapse into an “algorithmic apocalypse.” However, to give us the best chance of avoiding a real technology-instigated disaster, we, its human constituents, must remain vigilant.

We must remain proactive participants in the debate around how, where and why to deploy, control and consume these technologies.

Nuance in every area is incredibly important, and human judgment and involvement must not become scarce, as the article fears. Rather, they should be the primary filter through which all potential automations are considered.

During every step of AI development and implementation, we must keep front of mind that technology’s primary purpose is to advance human and societal causes, and we must make decisions accordingly.

Robert Corwin is the founder and CEO of Austin Artificial Intelligence, an AI, machine learning, and process automation tech company in Austin, Texas.